Python与CNN的碰撞详解

作者:石奥博123

AlexNet介绍

AlexNet是2012年ISLVRC 2012(ImageNet Large Scale Visual RecognitionChallenge)竞赛的冠军网络,分类准确率由传统的 70%+提升到 80%+。它是由Hinton和他的学生Alex Krizhevsky设计的。也是在那年之后,深度学习开始迅速发展。

idea

(1)首次利用 GPU 进行网络加速训练。

(2)使用了 ReLU 激活函数,而不是传统的 Sigmoid 激活函数以及 Tanh 激活函数。

(3)使用了 LRN 局部响应归一化。

(4)在全连接层的前两层中使用了 Dropout 随机失活神经元操作,以减少过拟合。

过拟合

根本原因是特征维度过多,模型假设过于复杂,参数过多,训练数据过少,噪声过多,导致拟合的函数完美的预测训练集,但对新数据的测试集预测结果差。 过度的拟合了训练数据,而没有考虑到泛化能力。

解决方案

使用 Dropout 的方式在网络正向传播过程中随机失活一部分神经元。

卷积后矩阵尺寸计算公式

经卷积后的矩阵尺寸大小计算公式为: N = (W − F + 2P ) / S + 1

① 输入图片大小 W×W

② Filter大小 F×F

③ 步长 S

④ padding的像素数 P

AlexNet网络结构

| layer_name | kernel_size | kernel_num | padding | stride |

| Conv1 | 11 | 96 | [1, 2] | 4 |

| Maxpool1 | 3 | None | 0 | 2 |

| Conv2 | 5 | 256 | [2, 2] | 1 |

| Maxpool2 | 3 | None | 0 | 2 |

| Conv3 | 3 | 384 | [1, 1] | 1 |

| Conv4 | 3 | 384 | [1, 1] | 1 |

| Conv5 | 3 | 256 | [1, 1] | 1 |

| Maxpool3 | 3 | None | 0 | 2 |

| FC1 | 2048 | None | None | None |

| FC2 | 2048 | None | None | None |

| FC3 | 1000 | None | None | None |

model代码

from tensorflow.keras import layers, models, Model, Sequential

def AlexNet_v1(im_height=224, im_width=224, num_classes=1000):

# tensorflow中的tensor通道排序是NHWC

input_image = layers.Input(shape=(im_height, im_width, 3), dtype="float32") # output(None, 224, 224, 3)

x = layers.ZeroPadding2D(((1, 2), (1, 2)))(input_image) # output(None, 227, 227, 3)

x = layers.Conv2D(48, kernel_size=11, strides=4, activation="relu")(x) # output(None, 55, 55, 48)

x = layers.MaxPool2D(pool_size=3, strides=2)(x) # output(None, 27, 27, 48)

x = layers.Conv2D(128, kernel_size=5, padding="same", activation="relu")(x) # output(None, 27, 27, 128)

x = layers.MaxPool2D(pool_size=3, strides=2)(x) # output(None, 13, 13, 128)

x = layers.Conv2D(192, kernel_size=3, padding="same", activation="relu")(x) # output(None, 13, 13, 192)

x = layers.Conv2D(192, kernel_size=3, padding="same", activation="relu")(x) # output(None, 13, 13, 192)

x = layers.Conv2D(128, kernel_size=3, padding="same", activation="relu")(x) # output(None, 13, 13, 128)

x = layers.MaxPool2D(pool_size=3, strides=2)(x) # output(None, 6, 6, 128)

x = layers.Flatten()(x) # output(None, 6*6*128)

x = layers.Dropout(0.2)(x)

x = layers.Dense(2048, activation="relu")(x) # output(None, 2048)

x = layers.Dropout(0.2)(x)

x = layers.Dense(2048, activation="relu")(x) # output(None, 2048)

x = layers.Dense(num_classes)(x) # output(None, 5)

predict = layers.Softmax()(x)

model = models.Model(inputs=input_image, outputs=predict)

return model

class AlexNet_v2(Model):

def __init__(self, num_classes=1000):

super(AlexNet_v2, self).__init__()

self.features = Sequential([

layers.ZeroPadding2D(((1, 2), (1, 2))), # output(None, 227, 227, 3)

layers.Conv2D(48, kernel_size=11, strides=4, activation="relu"), # output(None, 55, 55, 48)

layers.MaxPool2D(pool_size=3, strides=2), # output(None, 27, 27, 48)

layers.Conv2D(128, kernel_size=5, padding="same", activation="relu"), # output(None, 27, 27, 128)

layers.MaxPool2D(pool_size=3, strides=2), # output(None, 13, 13, 128)

layers.Conv2D(192, kernel_size=3, padding="same", activation="relu"), # output(None, 13, 13, 192)

layers.Conv2D(192, kernel_size=3, padding="same", activation="relu"), # output(None, 13, 13, 192)

layers.Conv2D(128, kernel_size=3, padding="same", activation="relu"), # output(None, 13, 13, 128)

layers.MaxPool2D(pool_size=3, strides=2)]) # output(None, 6, 6, 128)

self.flatten = layers.Flatten()

self.classifier = Sequential([

layers.Dropout(0.2),

layers.Dense(1024, activation="relu"), # output(None, 2048)

layers.Dropout(0.2),

layers.Dense(128, activation="relu"), # output(None, 2048)

layers.Dense(num_classes), # output(None, 5)

layers.Softmax()

])

def call(self, inputs, **kwargs):

x = self.features(inputs)

x = self.flatten(x)

x = self.classifier(x)

return xVGGNet介绍

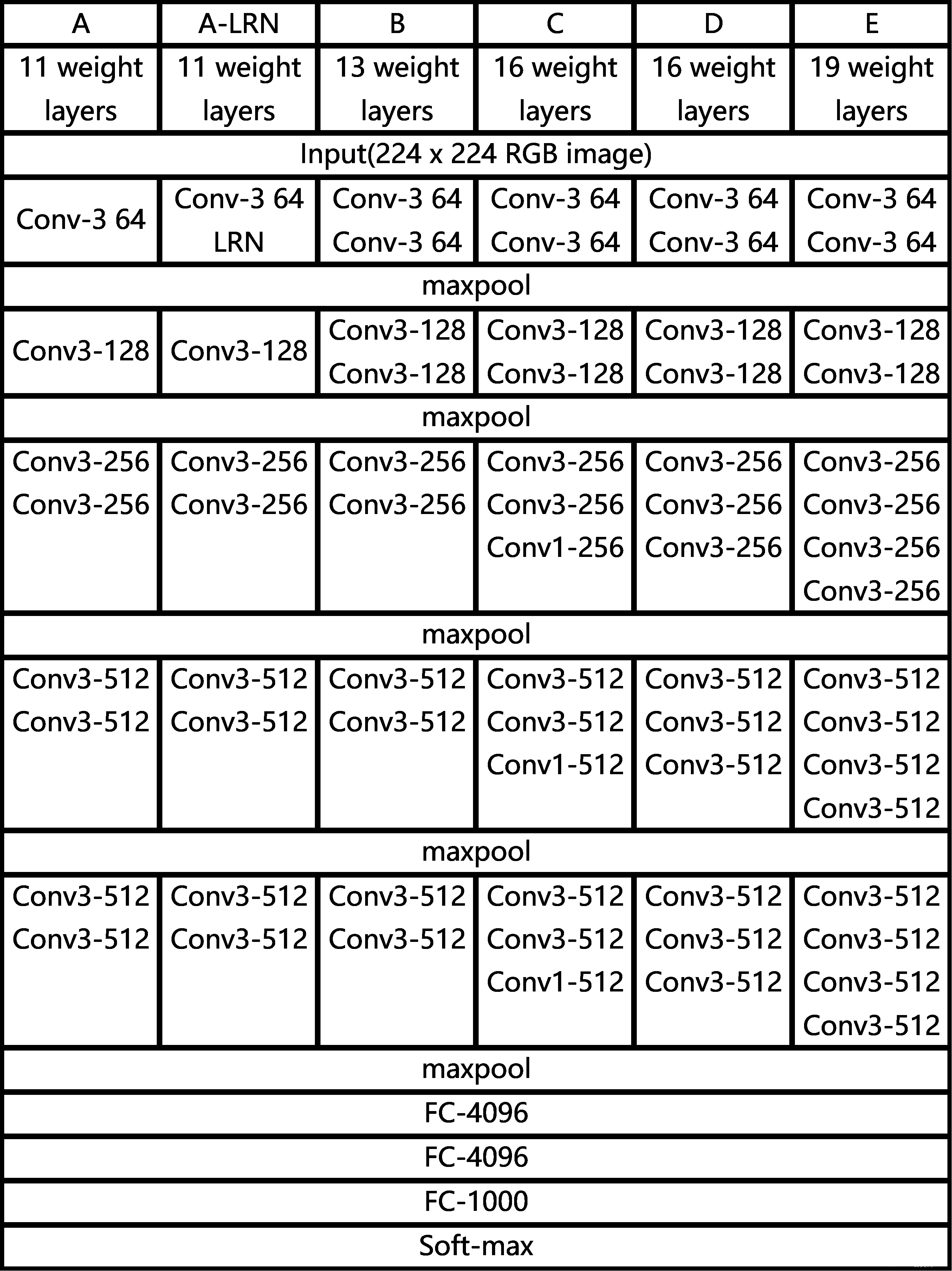

VGG在2014年由牛津大学著名研究组VGG (Visual Geometry Group) 提出,斩获该年ImageNet竞赛中 Localization Task (定位 任务) 第一名 和 Classification Task (分类任务) 第二名。

idea

通过堆叠多个3x3的卷积核来替代大尺度卷积核 (减少所需参数) 论文中提到,可以通过堆叠两个3x3的卷 积核替代5x5的卷积核,堆叠三个3x3的 卷积核替代7x7的卷积核。

假设输入输出channel为C

7x7卷积核所需参数:7 x 7 x C x C = 49C^2

3x3卷积核所需参数:3 x 3 x C x C + 3 x 3 x C x C + 3 x 3 x C x C = 27C^2

感受野

在卷积神经网络中,决定某一层输出 结果中一个元素所对应的输入层的区域大 小,被称作感受野(receptive field)。通俗 的解释是,输出feature map上的一个单元 对应输入层上的区域大小。

感受野计算公式

F ( i ) =(F ( i + 1) -1) x Stride + Ksize

F(i)为第i层感受野, Stride为第i层的步距, Ksize为卷积核或采样核尺寸

VGGNet网络结构

model代码

from tensorflow.keras import layers, Model, Sequential

#import sort_pool2d

import tensorflow as tf

CONV_KERNEL_INITIALIZER = {

'class_name': 'VarianceScaling',

'config': {

'scale': 2.0,

'mode': 'fan_out',

'distribution': 'truncated_normal'

}

}

DENSE_KERNEL_INITIALIZER = {

'class_name': 'VarianceScaling',

'config': {

'scale': 1. / 3.,

'mode': 'fan_out',

'distribution': 'uniform'

}

}

def VGG(feature, im_height=224, im_width=224, num_classes=1000):

# tensorflow中的tensor通道排序是NHWC

input_image = layers.Input(shape=(im_height, im_width, 3), dtype="float32")

x = feature(input_image)

x = layers.Flatten()(x)

x = layers.Dropout(rate=0.5)(x)

x = layers.Dense(2048, activation='relu',

kernel_initializer=DENSE_KERNEL_INITIALIZER)(x)

x = layers.Dropout(rate=0.5)(x)

x = layers.Dense(2048, activation='relu',

kernel_initializer=DENSE_KERNEL_INITIALIZER)(x)

x = layers.Dense(num_classes,

kernel_initializer=DENSE_KERNEL_INITIALIZER)(x)

output = layers.Softmax()(x)

model = Model(inputs=input_image, outputs=output)

return model

def make_feature(cfg):

feature_layers = []

for v in cfg:

if v == "M":

feature_layers.append(layers.MaxPool2D(pool_size=2, strides=2))

# elif v == "S":

# feature_layers.append(layers.sort_pool2d(x))

else:

conv2d = layers.Conv2D(v, kernel_size=3, padding="SAME", activation="relu",

kernel_initializer=CONV_KERNEL_INITIALIZER)

feature_layers.append(conv2d)

return Sequential(feature_layers, name="feature")

cfgs = {

'vgg11': [64, 'M', 128, 'M', 256, 256, 'M', 512, 512, 'M', 512, 512, 'M'],

'vgg13': [64, 64, 'M', 128, 128, 'M', 256, 256, 'M', 512, 512, 'M', 512, 512, 'M'],

'vgg16': [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 'M', 512, 512, 512, 'M', 512, 512, 512, 'M'],

'vgg19': [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 256, 'M', 512, 512, 512, 512, 'M', 512, 512, 512, 512, 'M'],

}

def vgg(model_name="vgg16", im_height=224, im_width=224, num_classes=1000):

assert model_name in cfgs.keys(), "not support model {}".format(model_name)

cfg = cfgs[model_name]

model = VGG(make_feature(cfg), im_height=im_height, im_width=im_width, num_classes=num_classes)

return model到此这篇关于Python与CNN的碰撞详解的文章就介绍到这了,更多相关Python CNN内容请搜索脚本之家以前的文章或继续浏览下面的相关文章希望大家以后多多支持脚本之家!